Autonomous Research Workflows: Leveraging Gemini Deep Research & MCP

Google's upgraded Gemini Deep Research Max brings MCP support, multimodal grounding, and native infographics, shifting AI research from chat summaries to infrastructure-grade pipelines.

Google has upgraded Gemini Deep Research and launched Deep Research Max. The update adds several workflow-relevant capabilities to the API that change how system builders construct research agents.

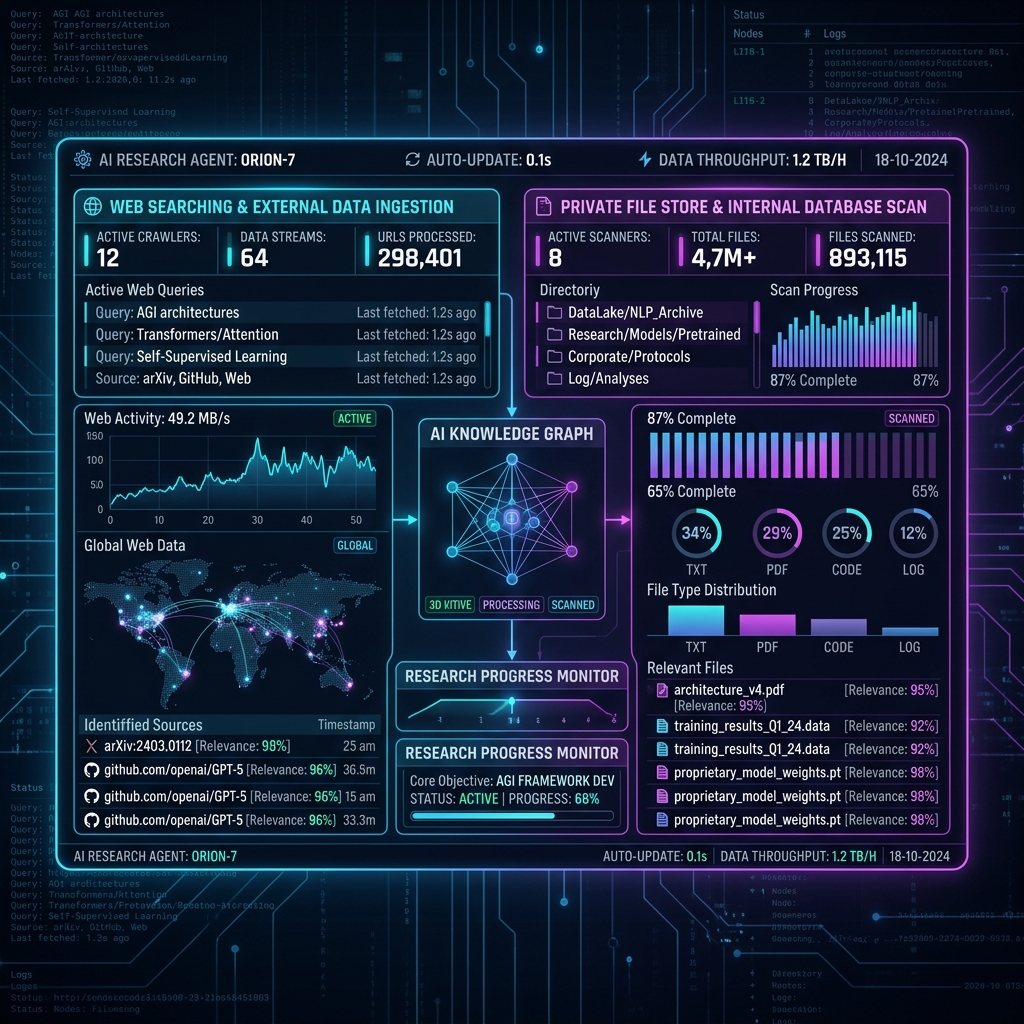

By unifying Model Context Protocol (MCP) support, explicit File Search parameters, collaborative planning features, and native chart generation, Google has signaled that it sees research agents as production infrastructure rather than conversational toys.

What Google Actually Launched

Two agent variants are currently available in public preview via the Gemini API (Interactions API):

- Deep Research: Optimized for speed and efficiency in interactive use.

- Deep Research Max: Engineered for maximum comprehensiveness, long-running synthesis jobs, and heavy offline reasoning.

The 5 Capabilities That Matter Most to Builders

For developers mapping out AI-assisted pipelines, the value of this release lies less in raw model capability than in its orchestration integrations:

- MCP Support: Connect internal company data repositories, bespoke databases, and professional tools without building large custom retrieval pipelines.

- File Search: Directly attach internal enterprise file stores for context.

- Collaborative Planning: The agent proposes a research plan before it starts token-intensive work, so the user can intervene and steer.

- Native Charts & Infographics: Built-in code execution turns raw data into rendered charts within the final output.

- Multimodal Grounding: Synthesis grounded in web search combined with private PDFs, internal CSVs, audio recordings, and video files.

Deep Research vs. Deep Research Max

Choosing between the two variants comes down mainly to a cost versus latency trade-off:

- Deep Research suits interactive surfaces, user-facing copilots, and rapid ad-hoc analyst requests where a 10-second wait is the ceiling.

- Deep Research Max is the backend workhorse. Schedule it as a cron-triggered background job (for example, at 2:00 AM) to perform in-depth market due diligence, nightly research briefs, or comprehensive codebase security audits.
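
The trade-off above can be sketched as a small routing function. This is a minimal illustration, not Gemini API code: the `Variant` labels, the `ResearchRequest` type, the `choose_variant` helper, and the use of the 10-second ceiling as a threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Variant(Enum):
    # Labels for illustration only; these are not official model or agent IDs.
    DEEP_RESEARCH = "deep-research"
    DEEP_RESEARCH_MAX = "deep-research-max"


@dataclass(frozen=True)
class ResearchRequest:
    query: str
    interactive: bool        # is a user actively waiting on the result?
    latency_budget_s: float  # how long the caller can wait, in seconds


# Assumed threshold, mirroring the 10-second interactive ceiling in the text.
INTERACTIVE_CEILING_S = 10.0


def choose_variant(req: ResearchRequest) -> Variant:
    """Route user-facing or latency-bound requests to the faster variant."""
    if req.interactive or req.latency_budget_s <= INTERACTIVE_CEILING_S:
        return Variant.DEEP_RESEARCH
    return Variant.DEEP_RESEARCH_MAX
```

A nightly batch job would pass `interactive=False` with a budget of hours and land on the Max variant, while a copilot request would stay on the faster one.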

Mapping a Practical Workflow Pipeline

A typical production workflow built on this API looks like this:

- Step 1: The user submits a complex research query.

- Step 2: The API returns a generated collaborative plan; the operator adjusts parameters.

- Step 3: The agent searches the live web while querying isolated internal sources via MCP.

- Step 4: File Search aggregates historical evidence from uploaded corporate document stores.

- Step 5: Code execution analyzes numerical trends and renders charts, such as radar charts.

- Step 6: A fully cited, formatted report is delivered automatically to a Slack channel, CMS, or executive dashboard.
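
The control flow of these steps can be sketched in plain Python. This is a hedged outline under stated assumptions, not the Interactions API: `Plan`, `Report`, `propose_plan`, and `run_pipeline` are hypothetical names, the plan is a stub, each evidence source (web, MCP, File Search) is modeled as an ordinary callable, and chart rendering (Step 5) is left out.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Plan:
    query: str
    steps: list[str]
    approved: bool = False


@dataclass
class Report:
    query: str
    findings: list[str] = field(default_factory=list)
    citations: list[str] = field(default_factory=list)


# A source takes a query and returns (finding, citation) pairs.
Source = Callable[[str], list[tuple[str, str]]]


def propose_plan(query: str) -> Plan:
    # Step 2 (stub): a real agent would generate this plan itself.
    return Plan(query, ["search web", "query internal sources", "report"])


def run_pipeline(
    query: str,
    sources: list[Source],
    review_plan: Callable[[Plan], Plan],
    deliver: Callable[[Report], None],
) -> Report:
    # Steps 1-2: take the query, propose a plan, let the operator review it.
    plan = review_plan(propose_plan(query))
    if not plan.approved:
        raise ValueError("plan was not approved; nothing was executed")

    # Steps 3-4: collect evidence from every configured source.
    report = Report(plan.query)
    for source in sources:
        for finding, citation in source(plan.query):
            report.findings.append(finding)
            report.citations.append(citation)

    # Step 6: hand the cited report to a delivery hook (Slack, CMS, ...).
    deliver(report)
    return report
```

Keeping plan review and delivery as injected callables means the approval step cannot be skipped silently, and the same pipeline can target different destinations.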

Operational Risks to Consider

Merging broad web search with proprietary private data is powerful, but it creates governance risks. System architects should curate MCP data connections carefully so that hallucinated or unverified content does not bleed into sensitive internal metrics. Furthermore, however thorough the output looks, the "Deep Research" label is no reason to bypass human review in high-stakes settings such as legal diligence or large procurement decisions.
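
One way to make these controls explicit is a pre-flight check that runs before any job is submitted. The sketch below is an assumption-laden illustration: the server names, the domain labels, and the `JobConfig`, `unapproved_servers`, and `needs_human_review` helpers are hypothetical and not part of any Gemini or MCP API.

```python
from dataclasses import dataclass

# Hypothetical allowlist of MCP server names approved by the data owner.
APPROVED_MCP_SERVERS = frozenset({"crm-readonly", "docs-search"})

# Hypothetical labels for domains where a human must sign off on the report.
HIGH_STAKES_DOMAINS = frozenset({"legal", "procurement"})


@dataclass(frozen=True)
class JobConfig:
    domain: str
    mcp_servers: tuple[str, ...]


def unapproved_servers(cfg: JobConfig) -> list[str]:
    """Return the requested MCP servers that are not on the allowlist."""
    return sorted(set(cfg.mcp_servers) - APPROVED_MCP_SERVERS)


def needs_human_review(cfg: JobConfig) -> bool:
    """High-stakes domains always require a human reviewer."""
    return cfg.domain in HIGH_STAKES_DOMAINS
```

A scheduler could refuse to start any job for which `unapproved_servers` is non-empty, and hold reports for sign-off whenever `needs_human_review` returns `True`.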

Builder Tip: Stop thinking in simple prompt engineering patterns. Treat Deep Research as an asynchronous data engineering pipeline. Anchor its outputs to your internal taxonomies so that the quality of what it retrieves stays aligned with your business logic.
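
Treating a research run as an asynchronous job usually means submitting it, then polling for a terminal state instead of blocking on a chat-style response. The following is a minimal sketch of that pattern with `asyncio`; the status strings, the `wait_for_job` helper, and the injected `get_status` coroutine are assumptions for illustration and do not reflect the actual Interactions API.

```python
import asyncio
from typing import Awaitable, Callable

# Hypothetical status strings; a real API may use different values.
TERMINAL_STATES = frozenset({"completed", "failed", "cancelled"})


async def wait_for_job(
    get_status: Callable[[str], Awaitable[str]],
    job_id: str,
    poll_interval_s: float = 30.0,
    timeout_s: float = 3600.0,
) -> str:
    """Poll a long-running job until it reaches a terminal state."""
    loop = asyncio.get_running_loop()
    deadline = loop.time() + timeout_s
    while True:
        status = await get_status(job_id)
        if status in TERMINAL_STATES:
            return status
        # Give up if the next poll would land after the deadline.
        if loop.time() + poll_interval_s > deadline:
            raise TimeoutError(f"job {job_id} still '{status}' at timeout")
        await asyncio.sleep(poll_interval_s)
```

Injecting `get_status` keeps the polling logic testable without network access; in production it would wrap whatever status call the chosen client library provides.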