OpenAI Codex Plugins and Multi-Agent Workflows — When Coding Agents Become Operations Infrastructure

Codex is no longer just a chat assistant for developers. With first-class plugins, path-based sub-agents, and structured messaging, it is evolving into a workflow layer for multi-agent software delivery. A practical analysis for engineering teams.

Codex Is No Longer a Chat Assistant

If you still think OpenAI Codex is just "ChatGPT that writes code", you're missing a critical shift.

In March 2026, OpenAI shipped a cluster of updates that transform Codex from a coding assistant into a workflow layer for multi-agent software delivery:

- Plugins became a first-class workflow

- Sub-agents got clear path-based addressing and structured inter-agent messaging

- Cloud handoff, GitHub review workflows, and automations were significantly enhanced

This article analyzes what actually changed and how engineering teams can operationalize Codex as infrastructure — not just a chat tool.

What Changed in Codex — March 2026

Plugins — First-Class Workflow

Plugins are no longer add-ons. Codex now supports:

- Syncing product-scoped plugins at startup

- Browsing, installing, and removing plugins from a directory

- Plugins bundling skills, app integrations, and MCP/server configuration

In plain English: instead of pasting the same prompts into a coding tool every time, you package a workflow once as a reusable plugin.

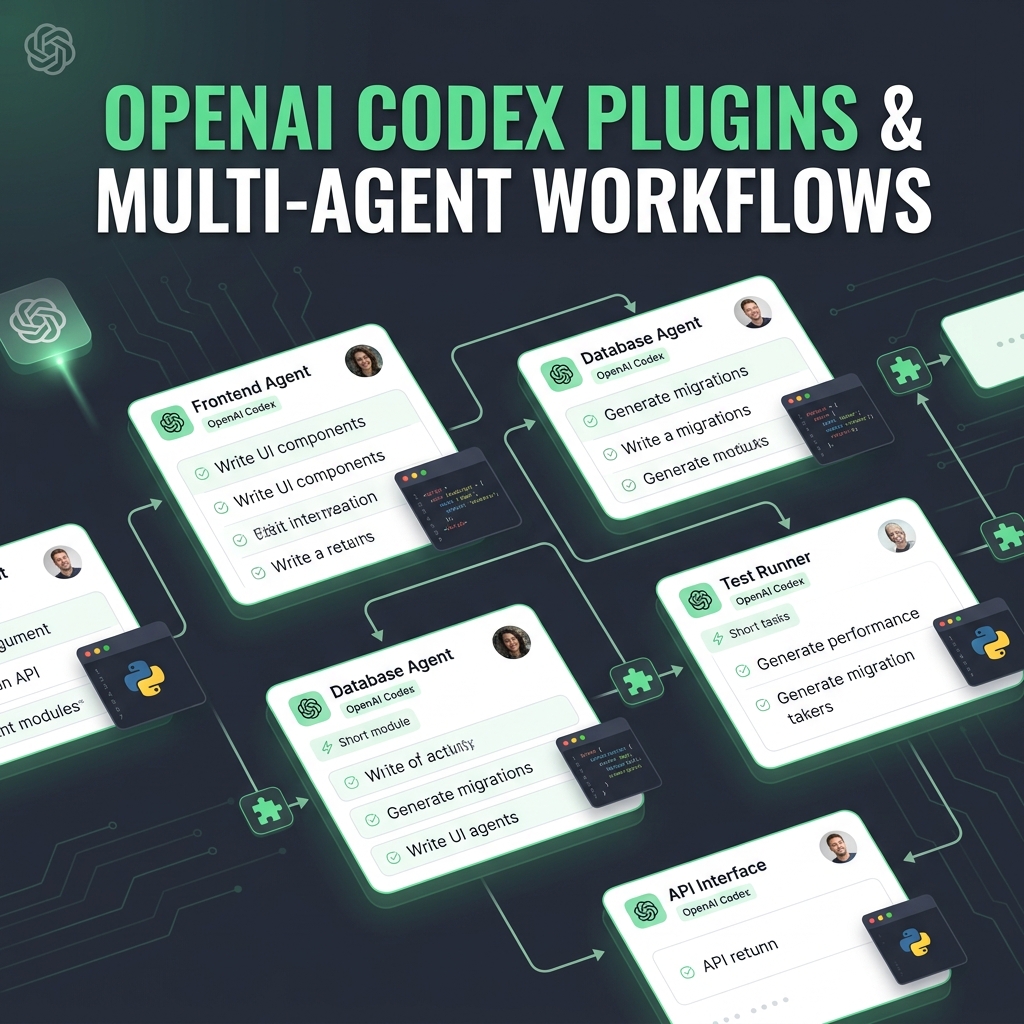
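To make the "bundle" idea concrete, here is a minimal sketch of what a plugin manifest could look like as a data structure. It is not Codex's actual plugin format: the class names, field names, and the `validate` helper are all hypothetical, chosen only to mirror the three things listed above (skills, app integrations, MCP/server configuration).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McpServer:
    """Hypothetical description of one MCP server a plugin wants configured."""
    name: str
    command: str
    args: tuple[str, ...] = ()


@dataclass(frozen=True)
class PluginManifest:
    """Hypothetical manifest holding the three kinds of content a plugin bundles."""
    name: str
    version: str
    skills: tuple[str, ...] = ()
    app_integrations: tuple[str, ...] = ()
    mcp_servers: tuple[McpServer, ...] = ()


def validate(manifest: PluginManifest) -> list[str]:
    """Return human-readable problems; an empty list means no problems were found."""
    problems = []
    if not manifest.name:
        problems.append("plugin name is empty")
    if not (manifest.skills or manifest.app_integrations or manifest.mcp_servers):
        problems.append("plugin bundles nothing")
    server_names = [server.name for server in manifest.mcp_servers]
    if len(server_names) != len(set(server_names)):
        problems.append("duplicate MCP server names")
    return problems


# Illustrative values only; none of these names come from a real plugin.
team_plugin = PluginManifest(
    name="team-conventions",
    version="0.1.0",
    skills=("write-migration", "review-pr"),
    app_integrations=("github",),
    mcp_servers=(McpServer(name="docs", command="docs-mcp-server"),),
)
```

Treating the manifest as one versioned object is what lets a team review and share a workflow as a unit rather than as scattered prompts and config files.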

Sub-Agents — Path-Based Addressing

Sub-agents now have clear path-based addresses (e.g., agents/frontend, agents/database), supporting:

- Structured inter-agent messaging

- Agent listing for multi-agent v2 workflows

- Parallel task distribution across specialized agents
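The combination of path-based addresses, agent listing, and structured messages can be sketched as a small in-process registry. This is an illustration of the pattern, not Codex's API: `Message`, `AgentRegistry`, and the message fields are assumptions, and only the `agents/frontend` and `agents/database` paths come from the text above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Message:
    """Hypothetical structured message exchanged between agents."""
    sender: str
    recipient: str
    kind: str
    payload: dict


class AgentRegistry:
    """Maps agent paths to handlers and routes messages by recipient path."""

    def __init__(self) -> None:
        self._handlers: dict[str, Callable[[Message], None]] = {}

    def register(self, path: str, handler: Callable[[Message], None]) -> None:
        if path in self._handlers:
            raise ValueError(f"agent already registered at {path!r}")
        self._handlers[path] = handler

    def list_agents(self, prefix: str = "") -> list[str]:
        """Return registered paths that start with the given prefix, sorted."""
        return sorted(p for p in self._handlers if p.startswith(prefix))

    def send(self, message: Message) -> None:
        """Deliver a message to the handler registered at its recipient path."""
        try:
            handler = self._handlers[message.recipient]
        except KeyError:
            raise LookupError(f"no agent at {message.recipient!r}") from None
        handler(message)


registry = AgentRegistry()
frontend_inbox: list[Message] = []
registry.register("agents/frontend", frontend_inbox.append)
registry.register("agents/database", lambda message: None)

# The database agent tells the frontend agent about a schema change.
registry.send(
    Message("agents/database", "agents/frontend", "schema_changed", {"table": "users"})
)
```

Because every message names its sender, recipient, and kind, a log of these messages doubles as a trace of which agent asked which other agent to do what.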

Cloud Handoff & GitHub Review

- Cloud handoff: Delegate heavy tasks to cloud environments, continue working locally

- GitHub review mode: Codex reviews PRs, suggests fixes, drafts comments

- Automations: Triage, CI/CD, and recurring maintenance tasks

Why Plugins Matter More Than They Sound

| Old Workflow | New Workflow with Plugins |
|---|---|
| Copy prompt into tool each time | Install plugin, rerun anytime |
| Each dev has a different setup | Plugin syncs conventions across team |
| Setup drift between projects | Plugin packages are reusable across repos |
| Manual MCP config | Plugin bundles MCP + app integrations |

Who benefits most:

- Agencies — productize delivery workflows as plugins for clients

- Internal platform teams — standardize AI development workflows

- Devtool-heavy startups — reduce onboarding time for new developers

The Real Story: Multi-Agent Orchestration

Plugins matter, but multi-agent orchestration is the paradigm shift.

Example: 4 Parallel Agents

| Agent | Role | Input | Output |
|---|---|---|---|
| 🔍 Research Agent | Survey codebase, find patterns | Repo context | Architecture notes |
| ✍️ Write Agent | Write/refactor code | Architecture notes + task spec | Code changes |
| 🧪 Test Agent | Write tests, run reviews | Code changes | Test results + review |
| 📝 Docs Agent | Update docs, release notes | Code changes + test results | Documentation |

Why This Beats One Mega-Agent

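- Reduced context overload: each agent holds only the context its task needs

- Parallel execution: once the Research Agent finishes, agents that don't wait on each other's output can run simultaneously (for example, the Write Agent implements while the Test Agent drafts tests from the task spec)

- Easier debugging: you can see which agent failed and at which step

- Reusable: a well-scoped agent such as the Test Agent can be carried over to other projects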
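The parallel-execution point can be sketched with `asyncio`: each agent starts as soon as the agents it depends on have finished. The dependency graph below follows the shape described in the bullets (Write and Test both start after Research, Docs waits for both), which assumes the Test Agent can begin from the research notes. `run_agent` is a hypothetical stand-in for a real agent call, and the graph is assumed to be acyclic.

```python
import asyncio

# Hypothetical dependency graph: agent name -> agents whose output it needs.
DEPENDS_ON = {
    "research": [],
    "write": ["research"],
    "test": ["research"],
    "docs": ["write", "test"],
}


async def run_agent(name: str, inputs: dict[str, str]) -> str:
    """Stand-in for a real agent call; it only records which inputs it consumed."""
    await asyncio.sleep(0)  # yield to the event loop, as a real network call would
    return f"{name}({', '.join(sorted(inputs))})"


async def run_pipeline(graph: dict[str, list[str]]) -> dict[str, str]:
    """Run every agent, starting each one once all of its dependencies are done."""
    tasks: dict[str, asyncio.Task] = {}

    async def run_when_ready(name: str) -> str:
        # Wait for every upstream agent, then run this one with their outputs.
        inputs = {dep: await tasks[dep] for dep in graph[name]}
        return await run_agent(name, inputs)

    # All tasks are created before any of them starts running, so the
    # lookups in run_when_ready always find their dependencies.
    for name in graph:
        tasks[name] = asyncio.create_task(run_when_ready(name))
    return {name: await task for name, task in tasks.items()}


results = asyncio.run(run_pipeline(DEPENDS_ON))
```

Here `write` and `test` become runnable at the same moment because both wait only on `research`. If the Test Agent really needs the finished code changes, changing its entry to `["write"]` makes the chain sequential again, which is a useful reminder that parallelism comes from the dependency graph rather than from the number of agents.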

A Practical Operating Model for Teams

5-Layer Architecture

| Layer | Purpose |
|---|---|
| Local env | Interactive work |
| Cloud handoff | Long-running tasks |
| GitHub review | PR feedback & fixes |
| Plugins | Standard workflows |
| Automations | Triage, CI/CD, maintenance |
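One way to read the five layers above is as a routing decision: given a task, which layer should handle it? The sketch below encodes that as a plain function. The `Task` fields, the rule order, and the 30-minute threshold are all assumptions made for illustration; a real team would tune them to its own workflow.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    LOCAL = "local env"
    CLOUD = "cloud handoff"
    REVIEW = "github review"
    PLUGIN = "plugins"
    AUTOMATION = "automations"


@dataclass(frozen=True)
class Task:
    """Hypothetical task description used only for routing."""
    description: str
    recurring: bool = False
    is_pull_request: bool = False
    has_packaged_workflow: bool = False
    estimated_minutes: int = 5


def choose_layer(task: Task, cloud_threshold_minutes: int = 30) -> Layer:
    """Pick a layer for a task; earlier rules take priority over later ones."""
    if task.recurring:
        return Layer.AUTOMATION  # triage, CI/CD, recurring maintenance
    if task.is_pull_request:
        return Layer.REVIEW  # PR feedback and fixes
    if task.has_packaged_workflow:
        return Layer.PLUGIN  # a standard workflow already exists
    if task.estimated_minutes >= cloud_threshold_minutes:
        return Layer.CLOUD  # long-running work is delegated
    return Layer.LOCAL  # everything else stays interactive
```

For example, `choose_layer(Task("refactor billing module", estimated_minutes=120))` returns `Layer.CLOUD`, while a quick one-off edit falls through to `Layer.LOCAL`.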

Sample Weekly Workflow

| Day | Developer | Codex |
|---|---|---|
| Monday | Code locally, assign refactor task | Delegate refactor to cloud |
| Tuesday | Review cloud output, merge | Automation checks PR quality |
| Wednesday | Design new feature | Research Agent surveys affected files |
| Thursday | Implement feature | Write Agent + Test Agent run in parallel |
| Friday | Final review, deploy | Docs Agent updates changelog, release notes |

Codex vs. the Coding Agent Market

| Type | Examples | Strength | Limitation |
|---|---|---|---|
| Chat copilots | GitHub Copilot inline | Fast, low friction | Can't orchestrate |
| Autonomous agents | Devin, SWE-agent | End-to-end tasks | Hard to govern |
| Workflow systems | Codex (new) | Plugin + multi-agent + review | Ecosystem still new |

Key insight: Workflow packaging plus multi-agent coordination is likely a more durable moat than raw model quality alone. Teams should evaluate orchestration, review quality, and deployability, not just benchmark scores.

Limitations and Adoption Risks

- Plugin governance: Who writes plugins? Who reviews them? Permission design matters

- Parallel agent complexity: agents working on the same repo need clear boundaries (files, branches, responsibilities) so their changes don't conflict

- Ownership conventions: teams need rules for who owns each agent's output

- Security & auditability: more automation calls for stricter logging and sandboxing

- Plugin ecosystem: still early, with few high-quality community plugins
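The governance and auditability points above can be made concrete with a small permission check that logs every decision. This is a sketch under stated assumptions, not a Codex feature: the plugin names, permission names, and the `authorize` function are hypothetical, and a real deployment would write to durable storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy: which permissions each reviewed plugin may use.
ALLOWED_PERMISSIONS = {
    "team-conventions": {"read_repo", "write_branch"},
    "release-notes": {"read_repo"},
}


def authorize(plugin: str, permission: str, audit_log: list[str]) -> bool:
    """Check a permission against the policy and record the decision either way."""
    allowed = permission in ALLOWED_PERMISSIONS.get(plugin, set())
    audit_log.append(
        json.dumps(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "plugin": plugin,
                "permission": permission,
                "allowed": allowed,
            }
        )
    )
    return allowed


audit_log: list[str] = []
authorize("release-notes", "read_repo", audit_log)     # allowed by policy
authorize("release-notes", "write_branch", audit_log)  # denied: not in its set
authorize("unreviewed-plugin", "read_repo", audit_log) # denied: plugin unknown
```

Denying unknown plugins by default and logging denials as well as approvals keeps the question "who reviews plugins?" answerable after the fact.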

Who Should Pay Attention Now

| Group | Why |
|---|---|
| Teams whose coding-assistant usage is hitting scaling friction | Plugins reduce setup drift, multi-agent reduces context overload |
| Small startups shipping fast | 1 dev + multi-agent = output of a 3-4 person team |
| Platform/DevEx teams | Standardize AI workflows org-wide |
| Consultants/Agencies | Productize delivery as reusable agent workflows |

Takeaway

Codex is shifting from assistant UX to agent operations infrastructure. Start experimenting with:

- One reusable plugin — package your team's conventions/workflow

- One parallel workflow — Research Agent + Write Agent for a specific task

The biggest win isn't "write code faster" — it's turning repeatable engineering work into systems.

Sources: OpenAI Codex Changelog · OpenAI Codex Product