We Tested 15 AI Coding Agents in 2026 — The 5 Criteria That Actually Matter

Benchmarks shouldn't be the basis for choosing an AI coding agent. After testing 15 tools, Morph identified 5 criteria developers rank highest: cost efficiency, productivity impact, code quality, repo context, and privacy. This summary covers those criteria, the Big Three tools, and a decision matrix by team profile.

Benchmarks Aren't What You Should Use to Decide

Morph tested 15 AI coding agents and published its numbers in March 2026. Two stand out: 42% of new code is now AI-assisted, and the same Opus 4.5 model running in different agents produced results 17 problems apart on SWE-bench Verified. In other words, the scaffolding around the model matters more than the model itself.
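To put the 17-problem gap in perspective, it can be converted into percentage points. The sketch below assumes the standard SWE-bench Verified size of 500 problems, which is a property of the benchmark rather than a figure from Morph's article; the function name is illustrative.

```python
# SWE-bench Verified is a 500-problem, human-validated subset of SWE-bench.
# This size is an assumption taken from the benchmark, not from the article.
SWE_BENCH_VERIFIED_SIZE = 500


def problems_to_points(problem_gap: int, total: int = SWE_BENCH_VERIFIED_SIZE) -> float:
    """Convert a gap in solved problems into percentage points."""
    return 100 * problem_gap / total


gap_points = problems_to_points(17)
print(f"{gap_points:.1f} percentage points")  # prints "3.4 percentage points"
```

Under that assumption, the same model swings by about 3.4 points depending on which agent wraps it, a spread comparable to the gaps often used to rank models against each other.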

Most comparisons lead with benchmark scores. Benchmarks are one signal among several. After talking to developers using these tools daily, 5 criteria come up consistently — in the order developers rank them, not the order marketing teams prefer.

5 Criteria Developers Actually Care About

1. Cost / Token Efficiency

"Which tool won't torch my credits?" is the first question developers ask, ahead of any benchmark score. Cost comes up as the top criterion across developer forums.

2. Real Productivity Impact

Time saved per week compared with previous tools. Developers want concrete numbers, not theory: how many PRs merge faster and how many review cycles disappear.

3. Code Quality & Trust

The percentage of output that needs significant rework, plus developer trust: how often you can accept a suggestion without reading every line carefully.

4. Repo Understanding & Context

What context window size means in practice. An agent with a 200K-token context window navigates a real codebase very differently from one limited to 8K tokens.
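A rough way to see the difference is to estimate how many lines of code fit in each window. The sketch below is a back-of-the-envelope estimate, not a measurement: the 10 tokens per line and the 25% of the window reserved for instructions and output are both hypothetical assumptions, and real ratios vary by language and tokenizer.

```python
def lines_that_fit(
    context_tokens: int,
    tokens_per_line: float = 10.0,    # assumed average, varies by language
    reserved_fraction: float = 0.25,  # assumed share kept for prompt and output
) -> int:
    """Estimate how many lines of source code fit in a context window."""
    usable_tokens = context_tokens * (1 - reserved_fraction)
    return int(usable_tokens // tokens_per_line)


for window in (8_000, 200_000):
    print(f"{window:>7} tokens -> ~{lines_that_fit(window)} lines of code")
```

With these assumptions, an 8K window holds roughly 600 lines (a file or two), while a 200K window holds roughly 15,000 lines, enough to keep many related modules in view at once.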

5. Privacy & Data Control

Who has access to the code you send. Especially critical for enterprise and regulated teams.

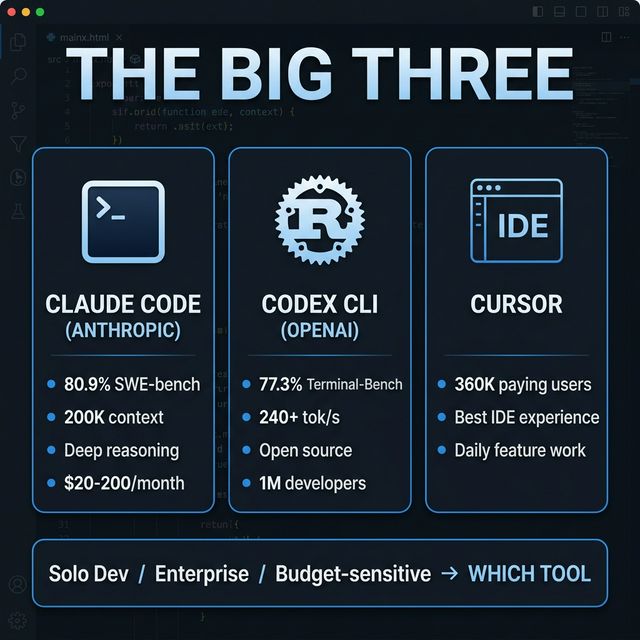

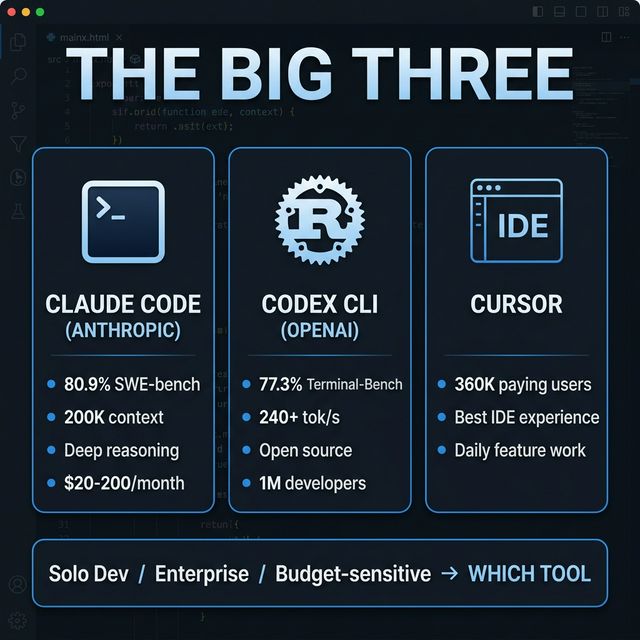

The Big Three

These three tools have the largest active user bases, the highest capability scores, and the most developer mindshare. If you're choosing today, your choice is almost certainly among these three.

Claude Code (Anthropic) — Best for Hard Problems

Best if: you want the deepest reasoning on hard problems and prefer working in the terminal.

Claude Code is Anthropic's terminal-native agent. Per SemiAnalysis, it has hit $2.5 billion ARR and accounts for over half of Anthropic's enterprise revenue — not marketing hype, but thousands of engineering teams paying $100-200/month per developer because the tool saves more than it costs.

Verified highlights:

- Opus 4.5: 80.9% SWE-bench Verified — highest of any model

- 200K token context window — entire codebases in working memory

- Auto-compaction for coherent long sessions

- Terminal with direct access: shell, file system, dev tools

- February 2026: Agent Teams for multi-agent coordination + MCP server integration

What developers rank highly:

- "The tool I reach for when other tools fail"

- Multi-file refactors, unfamiliar codebases, architectural bugs — this is the sweet spot

- Common pattern: Cursor/Copilot for daily feature work → Claude Code when hitting hard problems

What the community complains about:

- Cost: Starts at $20/month, heavy usage (Opus models) → $150-200/month. Billing is opaque — developers report surprise API bills.

- Rate limits: Even on the $200/month Max plan, you're buying a higher throttle, not real control. One developer: "The rate limits are the product. The model is just bait."

- No free tier. Every other competitor offers some free path.

Honest verdict: Most capable agent for hard problems, but most expensive. If your work regularly involves problems where other tools give up → cost is justified. If you primarily write straightforward features → you're overpaying.

Codex CLI (OpenAI) — Best for Speed & Openness

Best if: you want speed, open source, and the highest Terminal-Bench scores.

Codex CLI is OpenAI's open-source terminal agent, built in Rust. It reached 1 million developers in its first month and is backed by the GPT-5.x family.

Verified highlights:

- 77.3% Terminal-Bench — highest terminal-based task performance

- 240+ tokens/second generation speed

- Open-source Rust codebase — extensible

- GPT-5.x models

When Codex CLI wins:

- Speed matters more than reasoning depth

- You want to extend and customize the agent

- Budget-conscious but need a strong terminal agent

- Terminal-Bench tasks (speed-oriented development)

Cursor — Best IDE Experience

Best if: you live in an editor and want polish for daily feature work.

Cursor has 360K paying customers. It entered the AI code editor category early and still leads on UX.

Verified highlights:

- 360K paying users — proves real product-market fit

- Polish and UX — no competitor comes close for IDE experience

- Most developers who try Cursor don't go back to vanilla VS Code

Honest limitation:

- Multi-file editing is less reliable than Claude Code

- Pricing trust issues — community complaints about unexpected billing changes

- Developers "outgrow" Cursor, typically moving to Claude Code or Codex CLI

Strong Alternatives — Not Second Tier

These tools are not second-tier. Each one is the right choice for a specific workflow or constraint.

| Tool | Best for | Pricing | Key stat |
|---|---|---|---|
| Windsurf | Best value among paid IDEs | Free / $15 / $30 / $60 | #1 LogRocket rankings; Google acquired ~$2.4B |
| Cline | Full model freedom, zero markup | Free (BYOM) | 5M VS Code installs |
| GitHub Copilot | Safe default, any IDE, $10/month | $10/month | 15M developers |
| Devin | Handing off entire tasks | $20 + $2.25/ACU | 67% PR merge rate; dropped from $500→$20/month |

Windsurf: Wave 13 introduced 5 parallel Cascade agents via git worktrees. Arena Mode runs two agents on the same prompt with hidden model identities, and you vote on which performed better. The Memories feature retains codebase context. Community consensus rates it the best value per dollar.

Cline: BYOM with no markup. 5M VS Code installs. Dual Plan+Act modes. Running Claude Sonnet 4.6 via Cline: ~$3-8/hour for heavy usage.

Copilot: Reliable, low-friction, works everywhere (VS Code, JetBrains, Xcode, Neovim). Agent Mode with MCP support. Free tier for students/open-source. Limitation: multi-file editing is less reliable than Cursor's.

Devin: Most autonomous. Sandboxed cloud environment with its own IDE, browser, terminal. Devin 2.0 with Interactive Planning and Devin Wiki (auto-indexes repos). 67% PR merge rate on well-defined tasks. ~85% failure on complex/ambiguous tasks without intervention.
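Devin's usage-based pricing and its merge rate can be combined into an effective cost per merged PR. The sketch below is illustrative only: it reads the listed pricing as a $20 monthly base plus $2.25 per ACU with no ACUs included in the base, and the ACU counts passed in are hypothetical, since the article does not say how many ACUs a typical task consumes.

```python
BASE_FEE_USD = 20.00   # monthly base, from the pricing listed above
PRICE_PER_ACU = 2.25   # USD per ACU, from the pricing listed above
MERGE_RATE = 0.67      # PR merge rate on well-defined tasks


def monthly_cost(acus_used: float) -> float:
    """Base fee plus usage, assuming no ACUs are bundled into the base fee."""
    return BASE_FEE_USD + PRICE_PER_ACU * acus_used


def cost_per_merged_pr(acus_per_task: float, merge_rate: float = MERGE_RATE) -> float:
    """Usage cost of one attempt divided by the chance that it merges."""
    return PRICE_PER_ACU * acus_per_task / merge_rate


# Hypothetical workload: 40 ACUs in a month, 4 ACUs per task.
print(f"Monthly cost: ${monthly_cost(40):.2f}")
print(f"Cost per merged PR: ${cost_per_merged_pr(4):.2f}")
```

The second function shows why the merge rate matters for budgeting: every failed attempt still consumes ACUs, so the cost of a merged PR is higher than the cost of a single attempt.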

Decision Matrix by Team Profile

| Profile | Primary tool | Terminal agent | Backup |
|---|---|---|---|
| Solo dev / startup | Cursor | Codex CLI | Copilot ($10/month everywhere) |
| Enterprise / regulated | GitHub Copilot | Claude Code | Windsurf |
| Heavy refactor work | Claude Code | Codex CLI | — |
| Routine features, high volume | Cursor or Windsurf | Codex CLI | Copilot |
| Budget-sensitive | Windsurf or Cline (BYOM) | Codex CLI | Copilot free tier |
| Full task delegation | Devin (well-defined tasks only) | Claude Code | — |

Practical Evaluation Checklist

3-task test battery (run with every agent you're considering):

Task 1 — Bug fix: A real bug in your actual codebase. Not toy examples.

- Metric: Fix time + number of revisions needed

Task 2 — Refactor: Refactor a complex module (multi-file, cross-dependencies).

- Metric: Correctness post-refactor + review burden

Task 3 — Test writing: Write tests for existing code with moderate complexity.

- Metric: Coverage quality + number of edits needed

Metrics to track:

- Time saved per task (honest measurement)

- Review burden: % of output needing significant changes

- Token spend: $ cost per task

- Failure rate: % of times you had to abandon agent output entirely
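One way to keep these measurements honest is to log every run in the same shape and aggregate per agent. The sketch below is a minimal example rather than a prescribed method: the `TaskRun` fields, the agent name `agent_a`, and the sample numbers are all hypothetical, and review burden is approximated as the share of tasks whose output needed significant changes.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    agent: str
    task: str                  # "bug_fix", "refactor", or "tests"
    minutes_saved: float       # versus doing the task without the agent
    needed_major_rework: bool  # output required significant changes
    cost_usd: float            # token spend for this task
    abandoned: bool            # agent output was discarded entirely


def summarize(runs: list[TaskRun]) -> dict[str, dict[str, float]]:
    """Aggregate the four checklist metrics per agent."""
    by_agent: dict[str, list[TaskRun]] = {}
    for run in runs:
        by_agent.setdefault(run.agent, []).append(run)

    summary: dict[str, dict[str, float]] = {}
    for agent, agent_runs in by_agent.items():
        n = len(agent_runs)
        summary[agent] = {
            "avg_minutes_saved": sum(r.minutes_saved for r in agent_runs) / n,
            "review_burden_pct": 100 * sum(r.needed_major_rework for r in agent_runs) / n,
            "avg_cost_usd": sum(r.cost_usd for r in agent_runs) / n,
            "failure_rate_pct": 100 * sum(r.abandoned for r in agent_runs) / n,
        }
    return summary


# Hypothetical results for one agent across the 3-task battery.
runs = [
    TaskRun("agent_a", "bug_fix", 30.0, False, 1.0, False),
    TaskRun("agent_a", "refactor", 15.0, True, 2.0, False),
    TaskRun("agent_a", "tests", 0.0, True, 3.0, True),
]
report = summarize(runs)
for metric, value in report["agent_a"].items():
    print(f"{metric}: {value:.1f}")
```

Recording abandoned runs with zero minutes saved, instead of dropping them, keeps the averages from flattering an agent that fails often.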

Cost Reality: Hidden Billing + Rate Limits

The real cost of BYOM tools: BYOM (Bring Your Own Model) tools are "free," but your API bill is not. Running Claude Sonnet 4.6 through Cline or Kilo Code costs roughly $3-8/hour for heavy usage at current API rates. Running Opus costs 5-10x more. The advantage of BYOM is control and provider flexibility, not a lower price.
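The hourly figure translates into a monthly bill once you pick a number of heavy-usage hours. The sketch below uses the $3-8/hour range quoted above; the 40 hours per month and the $20 flat subscription used for comparison are hypothetical inputs chosen for illustration.

```python
HOURLY_RATE_RANGE = (3.0, 8.0)  # USD/hour for heavy Sonnet usage, from the text above


def monthly_byom_cost(
    hours_per_month: float,
    rate_range: tuple[float, float] = HOURLY_RATE_RANGE,
) -> tuple[float, float]:
    """Return the (low, high) monthly API spend for a given number of hours."""
    low_rate, high_rate = rate_range
    return (low_rate * hours_per_month, high_rate * hours_per_month)


def break_even_hours(
    subscription_usd: float,
    rate_range: tuple[float, float] = HOURLY_RATE_RANGE,
) -> tuple[float, float]:
    """Hours per month at which BYOM spend equals a flat subscription.

    Returns (hours at the high rate, hours at the low rate).
    """
    low_rate, high_rate = rate_range
    return (subscription_usd / high_rate, subscription_usd / low_rate)


low, high = monthly_byom_cost(40)  # hypothetical: 40 heavy-usage hours a month
print(f"40 hours/month: ${low:.0f}-${high:.0f}")

fast, slow = break_even_hours(20.0)  # hypothetical $20/month flat plan
print(f"Break-even vs $20 flat: {fast:.1f}-{slow:.1f} hours/month")
```

Under these inputs, 40 hours of heavy usage costs $120-320 a month, and BYOM spend passes a $20 flat plan after roughly 2.5 to 6.7 hours, which is why the case for BYOM rests on control rather than price.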

Estimated monthly costs by tool (March 2026):

| Tool | Light use | Heavy use |
|---|---|---|
| Claude Code | $20/month | $150-200/month |
| Codex CLI | Pay-per-token | Depends on usage |
| Cursor | $20/month | $20/month (flat) |
| Windsurf | $15/month | $15-30/month |
| Cline (BYOM) | $5-15/month API | $50-100/month API |
| Copilot | $10/month | $10/month (flat) |

Final Verdict

The honest answer for most teams: use more than one.

- Cursor or Windsurf as your daily IDE agent

- Claude Code or Codex CLI as your terminal agent for hard problems and automation

- Copilot as the $10/month safety net that works everywhere

The model routing consensus the developer community has settled on — Claude for depth, GPT-5.x for speed, cheap models for volume — applies to agents too.

Source: We Tested 15 AI Coding Agents (2026). Only 3 Changed How We Ship. — Morph, March 2026